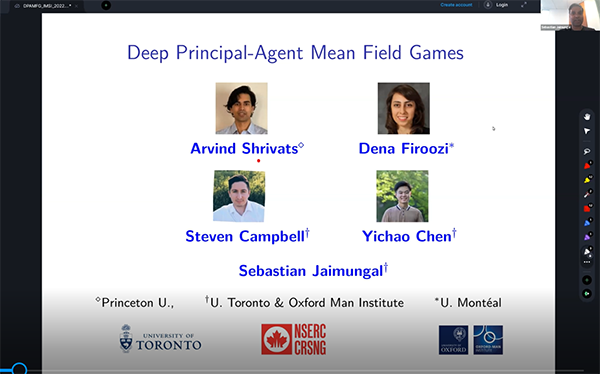

Deep Learning for Principal-Agent Mean Field Games

Presenter

May 24, 2022

Abstract

We develop a deep learning algorithm for solving Principal-Agent (PA) mean field games with market-clearing conditions, a class of problems that has not yet been studied and that poses difficulties for standard numerical methods. We use an actor-critic approach to optimization, in which the agents form a Nash equilibrium in response to the principal's penalty function, and the principal evaluates the resulting equilibrium. The inner problem's Nash equilibrium is obtained using a variant of the deep backward stochastic differential equation (BSDE) method, modified for McKean-Vlasov forward-backward SDEs (FBSDEs) that depend on the joint distribution of the forward and backward processes. The outer problem's loss is in turn approximated by a neural network, by sampling over the space of penalty functions. We prove that the methodology converges to the problem's solution and apply our approach to a stylized PA problem arising in Renewable Energy Certificate (REC) markets, where agents may rent clean energy production capacity, trade RECs, and expand their long-term capacity to navigate the market at maximum profit. Our numerical results illustrate the efficacy of the algorithm and lead to interesting insights into the nature of optimal PA interactions in the mean-field limit of these markets. [This is joint work with Steven Campbell, Yichao Chen, and Arvind Shrivats.]
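For orientation, a McKean-Vlasov FBSDE whose coefficients depend on the joint law of the forward and backward processes can be written in the generic form below. This is only an illustrative sketch: the coefficients $b$, $\sigma$, $f$, $g$, the initial condition $\xi$, and the Brownian motion $W$ are placeholder symbols that do not appear in the abstract, and the system studied in the talk may differ.

```latex
\begin{aligned}
dX_t &= b\bigl(t, X_t, Y_t, Z_t, \mathcal{L}(X_t, Y_t)\bigr)\,dt
        + \sigma\bigl(t, X_t, \mathcal{L}(X_t, Y_t)\bigr)\,dW_t,
        & X_0 &= \xi, \\
dY_t &= -f\bigl(t, X_t, Y_t, Z_t, \mathcal{L}(X_t, Y_t)\bigr)\,dt
        + Z_t\,dW_t,
        & Y_T &= g\bigl(X_T, \mathcal{L}(X_T)\bigr).
\end{aligned}
```

In the standard deep BSDE method, $Y_0$ and $Z_t$ are parametrized by neural networks, both equations are simulated forward in time with an Euler scheme, and the parameters $\theta$ are trained to minimize the terminal mismatch $\mathbb{E}\bigl[\,\lvert Y_T^{\theta} - g(X_T^{\theta}, \mathcal{L}(X_T^{\theta}))\rvert^2\bigr]$. A natural way to handle the law $\mathcal{L}(\cdot)$, assumed here for illustration, is to replace it with the empirical distribution of a batch of simulated paths.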